The future of AI is on blockchain

At the beginning of my professional career, I worked as a Data Scientist, and one of my early projects was the analysis of raw human genome data from patients with Alzheimer’s Disease. Many things were painful back then: we had to recruit participants one by one to enroll in our study, sequencing the genomes to get the data cost us more than 1m from a research grant, we had to set up a costly compute cluster ourselves, and even simple regression analyses took days to finish (per iteration). I especially remember working for weeks on engineering our data structures, optimizing database settings, and manually rewriting the analysis algorithms (because we exceeded our RAM limits), first just to get the analysis to compute at all, and then to get it to finish in days instead of months. A lot has changed since then.

The three most prominent enterprise technologies today are arguably AI, blockchain, and IoT, and the driving factor behind them is data; people even go so far as to proclaim that “data is the new oil”. Together they form what is essentially a data value chain: new technologies enable the collection, sharing, and analysis of data, and the automation of decisions based on it, in ways that were not possible before.

Out of the three, blockchain technology is what assembles the pieces, and a whole ecosystem of data-driven blockchain projects is emerging. This decentralized ecosystem is set to help incentivize people to contribute data, technical resources, and effort:

- 1st generation projects have focused on creating the data infrastructure to connect and integrate data, e.g. IOTA, IoT Chain, or IoTeX for data from connected IoT devices, or Streamr for streaming data.

- 2nd generation projects have been working on creating data marketplaces, e.g. Ocean Protocol, SingularityNET, or Fysical, and crowd data annotation platforms, e.g. Gems or Dbrain.

- With solutions covering the first steps of this data value chain maturing, my friends @sherm8n and Rahul started to work on Raven Protocol, a first 3rd generation project that will close an important gap at the analysis stage: compute resources for AI training.

A recent OpenAI report showed that “the amount of compute used in the largest AI training runs has been increasing exponentially with a 3.5 month-doubling time”. This amounts to a 300,000x increase since 2012.
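To make the quoted figures more tangible, here is a minimal Python sketch of the arithmetic behind them. It takes only the two numbers cited above (a 3.5-month doubling time and a 300,000x total increase) and assumes clean exponential growth; the function names are illustrative and not taken from the report.

```python
import math

# Figures quoted in the text above.
DOUBLING_TIME_MONTHS = 3.5
TOTAL_INCREASE = 300_000


def doublings_needed(factor: float) -> float:
    """Number of doublings required to grow by `factor`."""
    return math.log2(factor)


def months_to_reach(factor: float, doubling_time_months: float) -> float:
    """Months of steady exponential growth needed to grow by `factor`."""
    return doublings_needed(factor) * doubling_time_months


def growth_factor(months: float, doubling_time_months: float) -> float:
    """Growth multiple after `months` at the given doubling time."""
    return 2 ** (months / doubling_time_months)


doublings = doublings_needed(TOTAL_INCREASE)
months = months_to_reach(TOTAL_INCREASE, DOUBLING_TIME_MONTHS)
yearly = growth_factor(12, DOUBLING_TIME_MONTHS)

print(f"Doublings for a {TOTAL_INCREASE:,}x increase: {doublings:.1f}")
print(f"Time at a {DOUBLING_TIME_MONTHS}-month doubling time: {months / 12:.1f} years")
print(f"Implied growth per year: about {yearly:.0f}x")
```

Under these assumptions, a 300,000x increase corresponds to roughly 18 doublings, or a bit more than five years of growth at that pace, which means compute demand for the largest training runs grows by about an order of magnitude every year.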

The immediate consequences from this are:

- Higher costs, as demand for compute is increasing faster than supply

- Longer lead times for new solutions, as model training takes longer

- Increased market entry barriers based on access to funding & resources

These consequences can prove dire for smaller firms and researchers, limiting their ability to create competitive models without significant funding. And even with funding, they might be cut off from resources if the providers consider them competition.

But big corporations will feel the costs as well, because the growth in compute required per model is multiplied by the growth of their own AI efforts. I spoke to a few Chief Data Officers of Fortune 500 firms over the past months, and while they don’t consider it an issue yet, even they have to agree that they can invest their money in better ways than in bought-in HPC resources.

The beauty of blockchain ecosystems is that they allow us to tap into otherwise unused resources, to trade resources that would not have been tradable, and to bring in people who otherwise could not participate in the market. From an economic perspective, this improves the leverage on existing resources.

Where 1st & 2nd generation data blockchain solutions used this to lower the barrier for access to annotated quality data, Raven Protocol is going to address the training cost challenge. A chain is proverbially only as strong as its weakest link, and that holds for the data value chain too: Raven Protocol is closing the gap that would have prevented it from holding.

Together, the solutions in this blockchain data ecosystem create new opportunities and lower costs. The second point is especially important, as it lowers the entry barriers for innovation, enabling more people to contribute and therefore potentially accelerating our progress as a society.

If this all sounds a bit abstract, just have a look at one area where AI can help: healthcare. Our global healthcare system is in serious distress. Costs are exploding: they are expected to increase by 117% over the next 10 years, despite already being at up to 18% of a country’s GDP. At the same time, research for new drugs is hitting a cliff.

Our healthcare systems need a lot of innovation to keep healthcare affordable, and there are many opportunities where AI solutions can help; for this reason, healthcare is the industry with the highest investments in AI, and has been for years.

However, limited access to data and high costs create an entry barrier, which would restrict research into new solutions to incumbents & other big players. The blockchain data ecosystem changes that and increases our chances of finding the right solutions in time. Raven Protocol is probably not the last stepping stone, but it is an important one to make that work.

Author / copyright goes to Sebastian Wurst

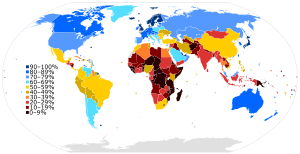

INTERNET USERS, SPEED, POTENTIAL IN INDIA

INTERNET USERS, SPEED, POTENTIAL IN INDIA  Google Pay

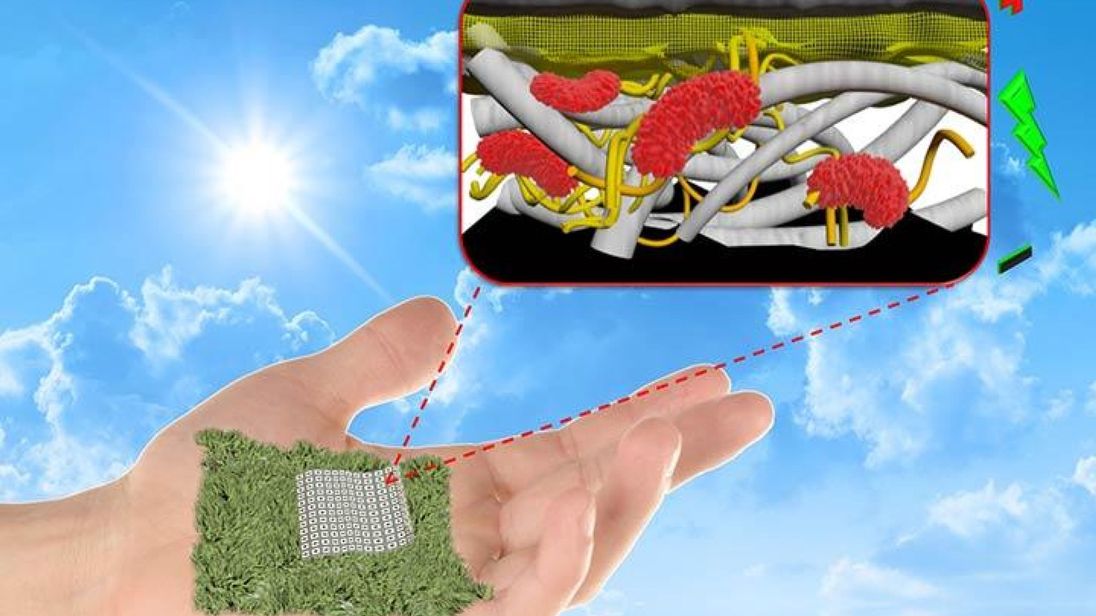

Google Pay  Scientists develop biodegradable batteries made out of paper

Scientists develop biodegradable batteries made out of paper  Samsung launches Fortnite for Android exclusive

Samsung launches Fortnite for Android exclusive  New Fund Offering by SBI MF – SBI Dividend Yield Fund

New Fund Offering by SBI MF – SBI Dividend Yield Fund  Bharti Airtel’s 5G user-base expands

Bharti Airtel’s 5G user-base expands  GK TODAY -HISTORY AT A GLANCE

GK TODAY -HISTORY AT A GLANCE